HOMESCHOOL (Previously 'Regarding the Pain of SpotMini')

Regarding the Pain of SpotMini is part of ongoing research into database accountability, parametric design workflows, and their origins in systems of measurement and classification established in Europe during the 1500s.

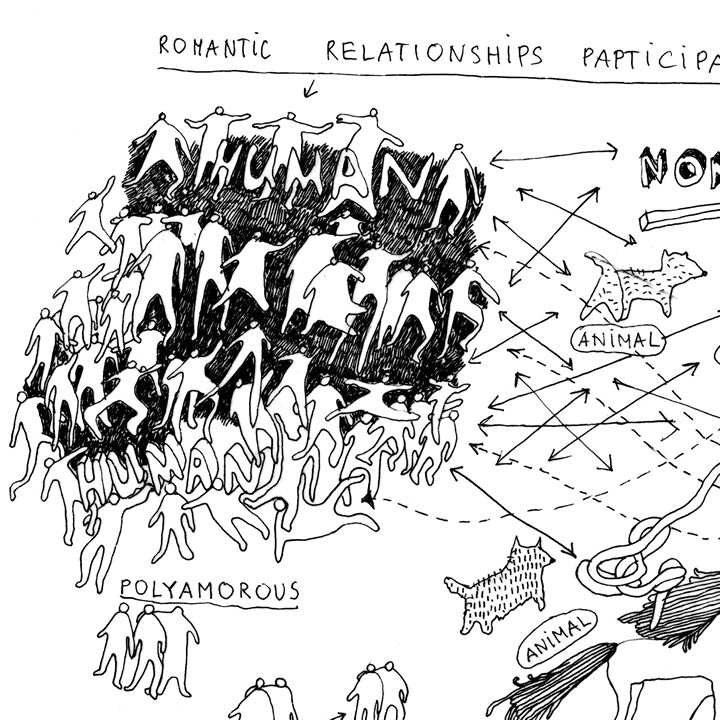

Autonomous systems are equipped with computer vision systems that allow them to navigate the built environment. Machine vision needs to be taught everything about the world it operates in: an autonomous robot navigating a domestic space needs to learn what a chair is, what a dining room is, what a human is. Large training databases of images are created for this purpose. A posthuman architecture of cohabitation between humans and autonomous machines reveals itself as hyperstandardized and exclusive. What image is used to teach a computer vision system what a human is?

Simone C Niquille (CH, NL) is a designer and researcher based in Amsterdam. Her practice Technoflesh investigates the representation of identity and the digitization of biomass in the networked space of appearance. She holds a BFA in Graphic Design from Rhode Island School of Design and an M.A. in Visual Strategies from the Sandberg Instituut Amsterdam. She teaches Design Research at ArtEZ University of the Arts Arnhem and is a 2016 Fellow of Het Nieuwe Instituut Rotterdam. Simone C Niquille is a commissioned contributor to the Dutch Pavilion at the 2018 Venice Architecture Biennale. Her current work investigates the standards of living and being embedded in parametric design processes.

How has the prototype evolved over the last months?

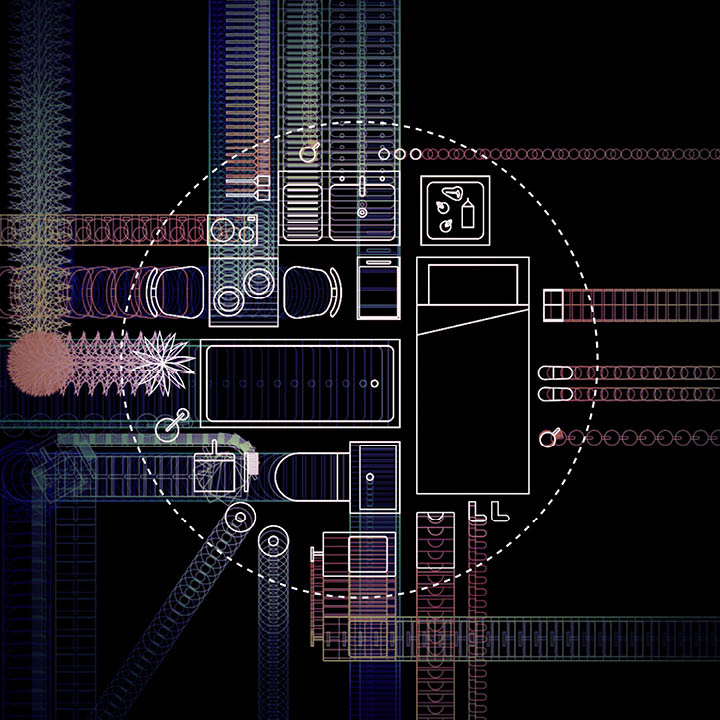

The presentation at CAFx will give insights into the process. Regarding the Pain of SpotMini has evolved into an investigation of synthetic training datasets for indoor computer vision. The project analyzes collections of 3D files arranged into domestic scenographies, which are used to test-drive robots and to teach object recognition and indoor navigation. To generate architectural layouts, the contents of such datasets must be categorized into descriptive clusters. Similar to biological taxonomy, this organization of spaces and objects looks something like this: living room>couch>blanket; kitchen>cupboard>cutlery.

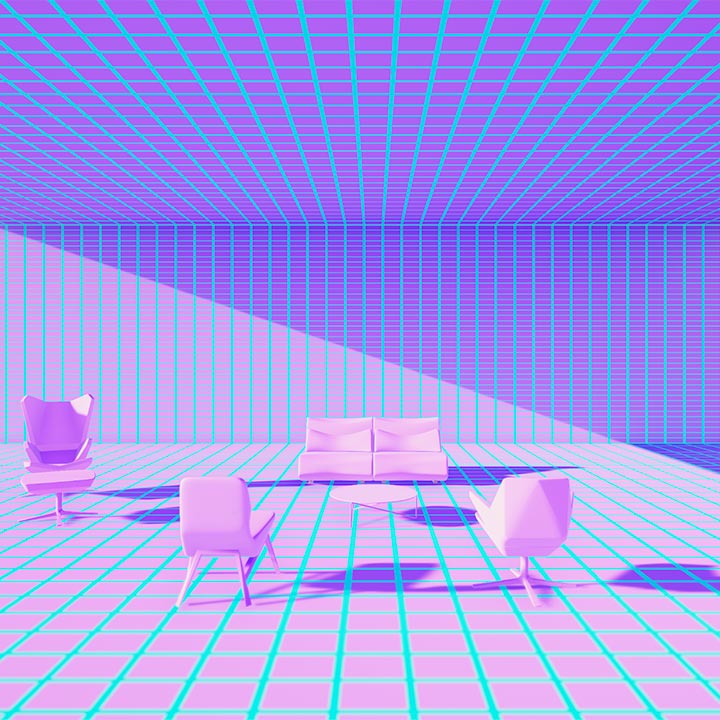

For one such dataset, while generating thousands of living room scenes, the scientists specified that living rooms should contain objects from the “box” category, perhaps expecting a chest or a cigar box. Instead, the living rooms were filled with mailboxes, and the scenes were discarded as faulty. This points to the challenges of building training datasets. Are chairs pieces of furniture with four legs and a backrest, or any object for sitting? A virtual world devoid of orientation, scale, and organization will be the scenography for a documentary film on the 21st-century design object: the training dataset.

The Finished Prototype

At the Housing the Human Festival, the film HOMESCHOOL encapsulates the research into database accountability.

HOMESCHOOL (2019, 12:44 min.) is a 3D-animated film based on an investigation of synthetic training datasets for indoor computer vision. Household robots rely on computer vision to navigate domestic environments, but a camera does not know what it is looking at. To train robots to understand their future environment, large datasets of 3D files are virtually assembled into model homes. Set within a scenography assembled from the contents of one of the largest such training datasets, SceneNet RGB-D, the short film makes visible the training data sealed within the resulting technology.

At the Housing the Human festival in radialsystem, the film will be presented as a scenographic screening, in which a virtual environment is explored by an unknown first-person narrator who learns by seeing, struggles to understand, and dwells in ambiguities.

Sound: Jeff Witsche, voice: Kiara K.

Commissioned by Housing the Human

With generous support from:

Fotomuseum Winterthur

Swissnex San Francisco